notes, ramblings, contemplations, transmutations, and otherwise ... on management and directory miscellanea.

Posts

Showing posts from 2014

PowerShell: Retrieve site location of computer object

Preparing for the End of Windows Server 2003

boosting the powershell ise with ise steroids

Microsoft Most Valuable Professional (MVP) 2015

atlanta systems management user group 10.03.14

powershell: limitation on retrieving members of a group

powershell: converting int64 datetime to something legible

enabling deduplication on unnamed volumes (and other stuff)

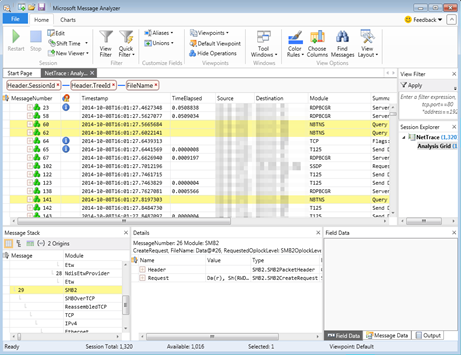

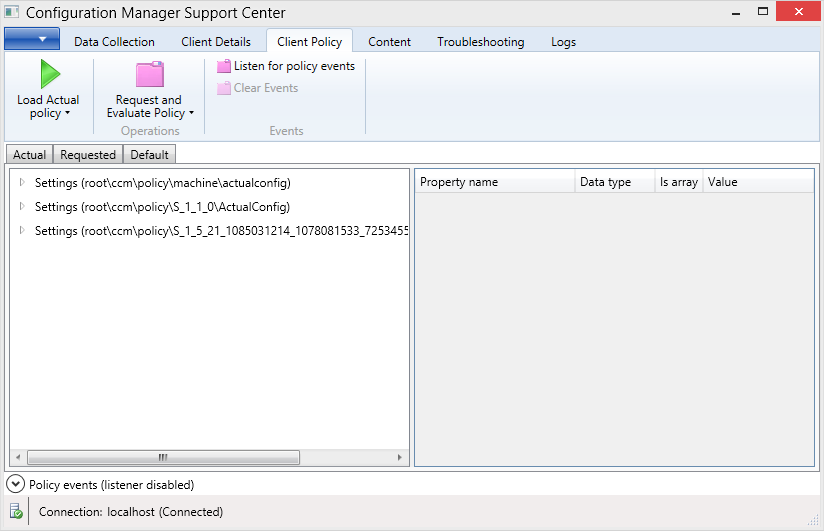

System Center Configuration Manager 2012 Cumulative Update 2

excel: my first use of power query (and i love it)

misc: power savings problem with snagit 12

03.28.2014 atlanta systems management user group
